Object detection is a computer technology related to computer vision and image processing that deals with detecting instances of semantic objects of a certain class (such as humans, buildings, or cars) in digital images and videos. [1] Well-researched domains of object detection include face detection and pedestrian detection. Object detection has applications in many areas of computer vision, including image retrieval and video surveillance.

Uses

Object detection is widely used in computer vision tasks such as image annotation, [2] vehicle counting, [3] activity recognition, [4] face detection, face recognition, and video object co-segmentation. It is also used to track objects, for example a ball during a football match, the movement of a cricket bat, or a person in a video.

Often, the test images are sampled from a different data distribution than the training images, making the object detection task significantly more difficult. [5] To address the domain gap between training and test data, many unsupervised domain adaptation approaches have been proposed. [5] [6] [7] [8] [9] A simple way to reduce the domain gap is to apply an image-to-image translation approach, such as CycleGAN. [10] Among other uses, cross-domain object detection is applied in autonomous driving, where models can be trained on large numbers of video game scenes, since their labels can be generated without manual labor.
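The idea of moving source-domain images toward the appearance of the target domain can be illustrated with a deliberately crude sketch. This is not CycleGAN, which learns the mapping with adversarial networks. Instead, it only shifts and scales pixel intensities so that their mean and spread match the target domain. The function name `match_statistics` and the toy pixel values are assumptions made for illustration.

```python
import statistics

def match_statistics(source_pixels, target_pixels):
    """Shift and scale source intensities to the target's mean and standard deviation.

    A hand-written stand-in for learned image-to-image translation, not a GAN.
    """
    s_mean = statistics.fmean(source_pixels)
    s_std = statistics.pstdev(source_pixels)
    t_mean = statistics.fmean(target_pixels)
    t_std = statistics.pstdev(target_pixels)
    # Guard against a constant source image, which has zero spread.
    scale = t_std / s_std if s_std > 0 else 1.0
    return [(p - s_mean) * scale + t_mean for p in source_pixels]

# Hypothetical data: bright synthetic (game) pixels versus darker real-world pixels.
synthetic = [0.7, 0.8, 0.9, 1.0]
real = [0.1, 0.2, 0.2, 0.3]
translated = match_statistics(synthetic, real)
```

After the call, `translated` keeps the relative ordering of the synthetic pixels but has the mean and standard deviation of the real ones. A detector trained on such translated images would see inputs closer to the test distribution, under the strong assumption that global intensity statistics capture the domain gap.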

Concept

Every object class has characteristic features that help in identifying its members; for example, all circles are round. Object class detection uses these features. For example, when looking for circles, the detector seeks objects whose points lie at a particular distance from a point (i.e. the center). Similarly, when looking for squares, it seeks objects that have perpendicular corners and equal side lengths. A similar approach is used for face identification, where the eyes, nose, and lips can be located and features such as skin color and the distance between the eyes can be measured.
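The circle and square criteria above can be written down as simple geometric tests. The following is a minimal sketch that assumes the object has already been reduced to a list of 2D points (boundary points for the circle, four ordered corners for the square). The function names and the tolerance parameter are illustrative assumptions, not part of any particular detector.

```python
import math

def is_circle(points, center, tol=1e-6):
    """True if every point lies at (nearly) the same distance from the center."""
    radii = [math.dist(p, center) for p in points]
    return max(radii) - min(radii) <= tol

def is_square(corners, tol=1e-6):
    """True if four corners, listed in order around the shape, form a square."""
    if len(corners) != 4:
        return False
    # Edge vectors between consecutive corners.
    edges = []
    for i in range(4):
        (x0, y0), (x1, y1) = corners[i], corners[(i + 1) % 4]
        edges.append((x1 - x0, y1 - y0))
    lengths = [math.hypot(dx, dy) for dx, dy in edges]
    if min(lengths) <= tol:
        return False  # degenerate shape with a zero-length side
    equal_sides = max(lengths) - min(lengths) <= tol
    # Adjacent edges are perpendicular when their dot product is zero.
    right_angles = all(
        abs(edges[i][0] * edges[(i + 1) % 4][0]
            + edges[i][1] * edges[(i + 1) % 4][1]) <= tol
        for i in range(4)
    )
    return equal_sides and right_angles

# Eight points on the unit circle, and three candidate quadrilaterals.
circle_points = [(math.cos(k * math.pi / 4), math.sin(k * math.pi / 4)) for k in range(8)]
print(is_circle(circle_points, (0.0, 0.0)))
print(is_square([(0, 0), (1, 0), (1, 1), (0, 1)]))   # unit square
print(is_square([(0, 0), (2, 0), (2, 1), (0, 1)]))   # rectangle: sides differ
print(is_square([(0, 0), (2, 1), (3, 3), (1, 2)]))   # rhombus: corners not perpendicular
```

Real images rarely yield such clean points, which is why practical detectors rely on tolerant, learned, or statistical versions of these feature tests.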

Methods

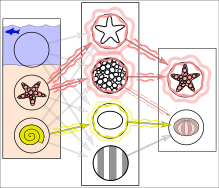

In reality, textures and outlines would not be represented by single nodes, but rather by associated weight patterns of multiple nodes.

Methods for object detection generally fall into either neural network-based or non-neural approaches. Non-neural approaches first define features using one of the methods below and then use a technique such as a support vector machine (SVM) to do the classification. Neural techniques, on the other hand, can perform end-to-end object detection without explicitly defined features, and are typically based on convolutional neural networks (CNNs).

- Non-neural approaches:

- Neural network approaches:
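The two-stage structure of the non-neural approach described above (a hand-defined feature, then a separately trained classifier) can be sketched in a few lines. This sketch makes several simplifying assumptions: the feature is a small gradient-orientation histogram, loosely in the spirit of histogram-of-oriented-gradients features; the classifier is a perceptron used as a lightweight stand-in for an SVM; and the "images" are toy 6×6 patches. All function names are hypothetical.

```python
import math

def orientation_histogram(patch, bins=4):
    """Hand-defined feature: gradient orientations, weighted by gradient magnitude."""
    hist = [0.0] * bins
    height, width = len(patch), len(patch[0])
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = patch[y][x + 1] - patch[y][x - 1]
            gy = patch[y + 1][x] - patch[y - 1][x]
            magnitude = math.hypot(gx, gy)
            if magnitude == 0:
                continue
            angle = math.atan2(gy, gx) % math.pi  # unsigned orientation in [0, pi)
            hist[int(angle / math.pi * bins) % bins] += magnitude
    total = sum(hist) or 1.0
    return [v / total for v in hist]

def train_perceptron(features, labels, epochs=20):
    """Linear classifier trained with the perceptron rule (a stand-in for an SVM)."""
    weights, bias = [0.0] * len(features[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            score = sum(w * xi for w, xi in zip(weights, x)) + bias
            if y * score <= 0:  # misclassified: move the boundary toward this sample
                weights = [w + y * xi for w, xi in zip(weights, x)]
                bias += y
    return weights, bias

def vertical_bar(col, size=6):
    return [[1.0 if x == col else 0.0 for x in range(size)] for _ in range(size)]

def horizontal_bar(row, size=6):
    return [[1.0 if y == row else 0.0 for _ in range(size)] for y in range(size)]

# Toy training set: vertical bars are the "object" class (+1), horizontal bars are not (-1).
patches = [vertical_bar(c) for c in (1, 2, 3)] + [horizontal_bar(r) for r in (1, 2, 3)]
labels = [1, 1, 1, -1, -1, -1]
weights, bias = train_perceptron([orientation_histogram(p) for p in patches], labels)

def is_object(patch):
    feats = orientation_histogram(patch)
    return sum(w * f for w, f in zip(weights, feats)) + bias > 0

print(is_object(vertical_bar(4)), is_object(horizontal_bar(4)))  # prints: True False
```

The feature extractor is fixed by hand and only the classifier is learned. A neural detector would instead learn both jointly from the pixels.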

See also

- Feature detection (computer vision)

- Moving object detection

- Small object detection

- Outline of object recognition

- Teknomo–Fernandez algorithm

References

- Dasiopoulou, Stamatia, et al. "Knowledge-assisted semantic video object detection." IEEE Transactions on Circuits and Systems for Video Technology 15.10 (2005): 1210–1224.

- Ling Guan; Yifeng He; Sun-Yuan Kung (1 March 2012). Multimedia Image and Video Processing. CRC Press. pp. 331–. ISBN 978-1-4398-3087-1.

- Alsanabani, Ala; Ahmed, Mohammed; AL Smadi, Ahmad (2020). "Vehicle Counting Using Detecting-Tracking Combinations: A Comparative Analysis". 2020 the 4th International Conference on Video and Image Processing. pp. 48–54. doi:10.1145/3447450.3447458. ISBN 9781450389075. S2CID 233194604.

- Wu, Jianxin, et al. "A scalable approach to activity recognition based on object use." 2007 IEEE 11th International Conference on Computer Vision. IEEE, 2007.

- Oza, Poojan; Sindagi, Vishwanath A.; VS, Vibashan; Patel, Vishal M. (2021-07-04). "Unsupervised Domain Adaptation of Object Detectors: A Survey". arXiv:2105.13502 [cs.CV].

- Khodabandeh, Mehran; Vahdat, Arash; Ranjbar, Mani; Macready, William G. (2019-11-18). "A Robust Learning Approach to Domain Adaptive Object Detection". arXiv:1904.02361 [cs.LG].

- Soviany, Petru; Ionescu, Radu Tudor; Rota, Paolo; Sebe, Nicu (2021-03-01). "Curriculum self-paced learning for cross-domain object detection". Computer Vision and Image Understanding. 204: 103166. arXiv:1911.06849. doi:10.1016/j.cviu.2021.103166. ISSN 1077-3142. S2CID 208138033.

- Menke, Maximilian; Wenzel, Thomas; Schwung, Andreas (October 2022). "Improving GAN-based Domain Adaptation for Object Detection". 2022 IEEE 25th International Conference on Intelligent Transportation Systems (ITSC). pp. 3880–3885. doi:10.1109/ITSC55140.2022.9922138. ISBN 978-1-6654-6880-0. S2CID 253251380.

- Menke, Maximilian; Wenzel, Thomas; Schwung, Andreas (2022-08-31). "AWADA: Attention-Weighted Adversarial Domain Adaptation for Object Detection". arXiv:2208.14662 [cs.CV].

- Zhu, Jun-Yan; Park, Taesung; Isola, Phillip; Efros, Alexei A. (2020-08-24). "Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks". arXiv:1703.10593 [cs.CV].

- Ferrie, C., & Kaiser, S. (2019). Neural Networks for Babies. Sourcebooks. ISBN 1492671207.

- Dalal, Navneet (2005). "Histograms of oriented gradients for human detection" (PDF). Computer Vision and Pattern Recognition. 1.

- Girshick, Ross (2014). "Rich feature hierarchies for accurate object detection and semantic segmentation" (PDF). Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. IEEE. pp. 580–587. arXiv:1311.2524. doi:10.1109/CVPR.2014.81. ISBN 978-1-4799-5118-5. S2CID 215827080.

- Girshick, Ross (2015). "Fast R-CNN" (PDF). Proceedings of the IEEE International Conference on Computer Vision. pp. 1440–1448. arXiv:1504.08083. Bibcode:2015arXiv150408083G.

- Ren, Shaoqing (2015). "Faster R-CNN". Advances in Neural Information Processing Systems. arXiv:1506.01497.

- Pang, Jiangmiao; Chen, Kai; Shi, Jianping; Feng, Huajun; Ouyang, Wanli; Lin, Dahua (2019-04-04). "Libra R-CNN: Towards Balanced Learning for Object Detection". arXiv:1904.02701v1 [cs.CV].

- Liu, Wei (October 2016). "SSD: Single Shot MultiBox Detector". Computer Vision – ECCV 2016. Lecture Notes in Computer Science. Vol. 9905. pp. 21–37. arXiv:1512.02325. doi:10.1007/978-3-319-46448-0_2. ISBN 978-3-319-46447-3. S2CID 2141740.

- Zhang, Shifeng (2018). "Single-Shot Refinement Neural Network for Object Detection". Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp. 4203–4212. arXiv:1711.06897. Bibcode:2017arXiv171106897Z.

- Lin, Tsung-Yi (2020). "Focal Loss for Dense Object Detection". IEEE Transactions on Pattern Analysis and Machine Intelligence. 42 (2): 318–327. arXiv:1708.02002. Bibcode:2017arXiv170802002L. doi:10.1109/TPAMI.2018.2858826. PMID 30040631. S2CID 47252984.

- Zhu, Xizhou (2018). "Deformable ConvNets v2: More Deformable, Better Results". arXiv:1811.11168 [cs.CV].

- Dai, Jifeng (2017). "Deformable Convolutional Networks". arXiv:1703.06211 [cs.CV].

- "Object Class Detection". Vision.eecs.ucf.edu. Archived from the original on 2013-07-14. Retrieved 2013-10-09.

- "ETHZ – Computer Vision Lab: Publications". Vision.ee.ethz.ch. Archived from the original on 2013-06-03. Retrieved 2013-10-09.